
Ever play an online game and feel your blood pressure rise over complete frustration with poor sportsmanship, or, even worse, feel your anxiety spike due to harassment and bullying taking place right before your eyes?

A game is only as strong as the community that supports it, but what happens when a few bad apples disrupt the flow and prevent others from having fun? Most gamers have a story where they’ve experienced griefing or team-killing, or, even worse, had another player verbally insult them in a way that goes well beyond “trash talk.” In fact, a recent study by anti-bullying organization Ditch the Label reported that 57 percent of the young people it surveyed experienced bullying online while playing games; even more alarming, 22 percent said it caused them to stop playing. Instead of drawing people to games, are more people turning away from them due to these unpleasant social interactions?

Negative experiences playing games online aren’t anything new; you can go back to the earlier days of commercial MMORPGs, such as EverQuest and Ultima Online, and find plenty of examples of these scenarios. A common perception among gamers has been that it just comes with the territory if you want to play online, but that doesn’t make it okay. Playing games should bring people together, and as gamers, we all know how powerful these experiences can be. Nobody should have to tolerate hate speech or threats to their safety to simply engage with their hobby online.

This issue has only continued to heat up as more games are evolving and becoming online-centric. The extra emphasis on their social aspects has forced developers to get creative to help encourage players to “play nice.” With more initiatives and efforts in this area, we chatted with leaders across the industry, from developers figuring out solutions to companies that specialize in moderation, to gain insight into the ever-growing and complex issue.

The word “toxic” seems to go hand-in-hand with online gaming and has been used as a way to describe problematic, negative players who go out of their way to make the experience unpleasant for others. Maybe it’s a player who’s purposely throwing a match in Dota 2, or spamming insults in League of Legends’ chat to make someone feel bad about their skills. This is what many developers consider “disruptive behavior” and is the preferred term when discussing these types of individuals.

No matter the phrasing, it still all comes down to one thing: They are getting in the way of how the game is meant to be experienced. Every developer we spoke to for this feature commented on this specifically and why it’s a bummer. “It’s in everyone’s best interest to make playing their games a fun, happy experience because that’s why people go to play these games – they want to have a fun time,” says Overwatch principal designer Scott Mercer.

This also extends to keeping players invested in a gaming experience. If something doesn’t feel fun or pleasant, why stick around? Dave McCarthy, head of operations at Xbox, puts forth a simple comparison to illustrate how important it is that these digital landscapes feel safe and protected: “I just think it’s as simple as, ‘Would you walk into a physical space, anywhere where you face harassment, or are made to feel unwelcome by certain imagery or language that’s used there?’ No, of course you wouldn’t; you get out of that space physically. And the same is true for the digital space.”

When players log into games, they look for the social norms to get an idea of what’s acceptable. Is it a more laid back, jokey atmosphere? Is it composed of serious competitors wanting to get down to business? That’s why it’s extremely important the tone is set early in games and services. Chris Priebe, founder and CEO of Two Hat Security, a company that provides moderation tools, says that a community’s identity forms on day one and that’s why it’s so important for those behind the games to build and inform the culture. “When people launch a game, they need to be thinking about, ‘How am I building the community and putting people in the community?’ I think too often in the game industry it’s just, ‘Launch it and the culture will form itself.’”

Priebe discussed how oftentimes moderation and chat features are thought about far too late in development, without much consideration going into how to shape the community. He compared it to hosting a party and how it takes shape once you set the tone. “If you don’t set a tone, it can go very, very poorly,” Priebe says. “That’s why people have bouncers at the front door. Somehow with games, we don’t think we need to put bouncers at the front door, and we wonder why things go so terribly wrong.”

While this might seem discouraging, Priebe says that in more recent years he has seen an increased effort going into changing this. People across the industry are working hard to find answers, whether that’s more transparent guidelines, better moderation tools, or designing solutions within the game. However, it all comes with time and experience, using the community as a testing ground.

The more people we spoke to about this topic, the clearer it became how complicated and difficult an issue it is. Most companies are experimenting with different features or tools to see what works, and some are even still deciding where to draw the line between “okay” and “not okay.” “It turns out that calling something toxic is difficult to design for,” says Weszt Hart, head of player dynamics at Riot Games. “It’s difficult to make decisions on, because it’s so subjective. What’s toxic to you might not be toxic to somebody else. Trash talk could be for some people considered toxic, but for others, that’s just what we do with our friends.”

Working on League of Legends, a team-based game that earned quite a reputation for its toxic community, Hart says it was challenging for the team to figure out where to focus to mitigate these issues. To figure out what the community considered “good” and “bad,” Riot presented the now-defunct Tribunal, where players logged in and reviewed cases, deciding if an offender should be disciplined or pardoned. After this, Riot tried encouraging more positive interactions by rolling out the honor system, a way to give your teammate kudos if you thought they did a good job. “But then we realized that all of those systems were after-the-fact, they were all after the games,” Hart explains. “They weren’t helping to avoid potential transgressions. We needed to identify where the problems were actually happening, maybe even before games.”

Enter team builder. “Team builder was looking at addressing, I suppose a way to put it is, a shortcoming of our design,” Hart says. “Because as the community evolved, the concept of a meta evolved with it. Players started telling us how to play and the system wasn’t recognizing their intent, so in an effort to play the way they wanted to play, they were essentially yelling out in chat the role that they wanted. We needed to find a way to help the system, help players play the way they wanted.” Riot created team builder for matches to start out on a better note, as a way to decrease players entering matches already frustrated, which often just increased the chance of negative interactions.

While Riot isn’t the first to deal with players treating each other poorly, the influence of its systems can be seen around the industry. Take Blizzard’s cooperative shooter Overwatch, for example. Overwatch launched back in 2016, and while its community is considered one of the more positive ones, the game has dealt with its share of problem players, which game director Jeff Kaplan often had to address in his developer update videos. Kaplan finally put it bluntly: “Our highest-level philosophy is, if you are a bad person doing bad things in Overwatch, we don’t want you in Overwatch.”

Since launch, Overwatch has received several improvements to the game: better reporting tools, an endorsement system encouraging positivity, and most recently, role queue, which took away the extra frustration and bickering that often erupted over team composition. The latter two are very reminiscent of League’s honor system and team builder.

Overwatch is far from Blizzard’s first foray into the world of online gaming, so the team anticipated some issues, but it also charted new territory. “I don’t think we were expecting exactly the sort of behavior that happened after launch,” says senior producer Andrew Boyd. “I know that there were a lot of new things for us to deal with. I think this is one of the first games where we’re really dealing with voice as an integrated part of the game, and that changed the landscape a lot. That said, when we saw it, obviously, addressing those issues became very important to us very quickly, and we started to take steps to make the game a better place for folks.”

While developers can try to catch potential issues ahead of time, most of the time those issues don’t reveal themselves until the game is up and running. Ubisoft Montreal experienced this first-hand with Rainbow Six Siege, forcing the company to crack down on bad behavior and get creative with its solutions. A player behavior team was created to “focus on promoting the behaviors we hope to see in the game,” says community developer Karen Lee. It’s here that the team worked on the Reverse Friendly Fire (RFF) system to help with team-killing. “RFF was first concepted to help contain the impact of players abusing the game’s friendly fire mechanic,” Lee says.

RFF makes it so if you attempt to harm an ally, the damage reverses straight back to you. Since then, Ubisoft has iterated on it to ensure it works on all the different operators and their gadgets. Now, before any new operator goes live, the player-behavior team reviews it, trying to determine all the ways the community could use it for griefing that the designers never intended. “We also have weekly and monthly reports that go out to the entire team,” Lee explains. “These help everyone gauge the health of the community, and we highlight the top concerns from the week.”

Many different game companies and organizations have been coming together [see The Fair Play Alliance sidebar] to share ideas and work toward change. Even though developers have learned much of what works and what doesn’t, there isn’t a one-size-fits-all solution, as all games are different, whether it’s the audience or genre. “The problem space is too big to look at any particular feature, and say, ‘This is how you do it,’” Hart says. “There aren’t best practices yet for what we’re calling player dynamics, which is the field of design for player-to-player interactions and motivations. Depending on your game and your genre, some things may work better than others.”

Building healthier communities doesn’t just fall on the developers and publishers. Sure, designing different mechanics and improving moderation tools are steps in the right direction, but they also need the community’s help to be successful. It makes sense. The people who play your game make it what it is and know it the best. That’s why more and more developers and companies are depending on their communities to give feedback and self-moderate by reporting bad player behavior. “Everyone needs to be involved,” Priebe says. “The gamers need to say, ‘Look, I’m sick of this.’” Priebe was quick to point out that he thinks most gamers already feel this way, but that more of them need to put their foot down and be vocal to help shift the culture. “It will take some gamers to say, ‘No, that isn’t cool. You can’t be in our guild unless you have good sportsmanship,’” he says.

Many believe the community should be just as involved with the process as they are when giving feedback on games for betas. “We need to work with our players and say, ‘What do you guys think?’ The same way we do when we develop our games,” says EA community team senior director Adam Tanielian. “We think the same idea should apply to our communities. How do we keep them healthy? And how do we build tools?” EA recently held a summit devoted to building healthier communities to start getting feedback from gamers and devs alike. Born from this was a “player council,” which Tanielian says meets regularly and is similar to the ones they have for their various franchises, but this focuses on feedback for tools, policies, and how EA should categorize toxicity. “We know that we have to take action,” Tanielian says. “We can’t just talk about it and not do something. Some things take longer than others, but there are always things we can be doing. There are always areas that we can be addressing.”

Many people we chatted with discussed how easy-to-use reporting tools have been essential, but players need to be encouraged to use them. If they’re hard to find, require players to visit a website, or are needlessly complex, developers and moderators simply won’t get the valuable information they need. Reporting also helps developers learn what the community values. “The community itself is sort of driving what’s good and what’s not great for it in terms of communication, in terms of that play experience,” Mercer says. “I think the most important thing about the reporting is it’s a way for the community to help police itself, to help determine amongst itself what they find acceptable or not.”

Players often feel more encouraged to report if they know it’s facilitating change. Sure, giving players the ability to mute or block players that rub them the wrong way helps, but once the Overwatch team started following up on reports and letting the players know action was taken, they noticed it led to an increase in reporting. “That was important, reaching out and building that trust,” Mercer says. “Saying, ‘Hey, as a member of the Overwatch community, you are part of the solution to dealing with issues of players acting poorly within a game.’”

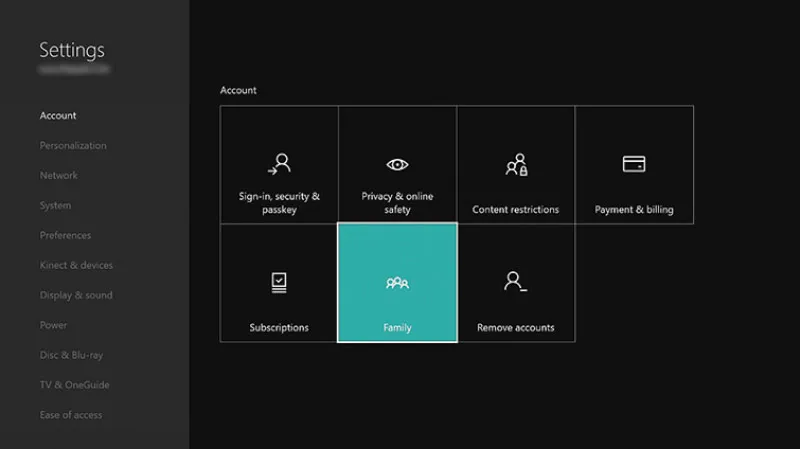

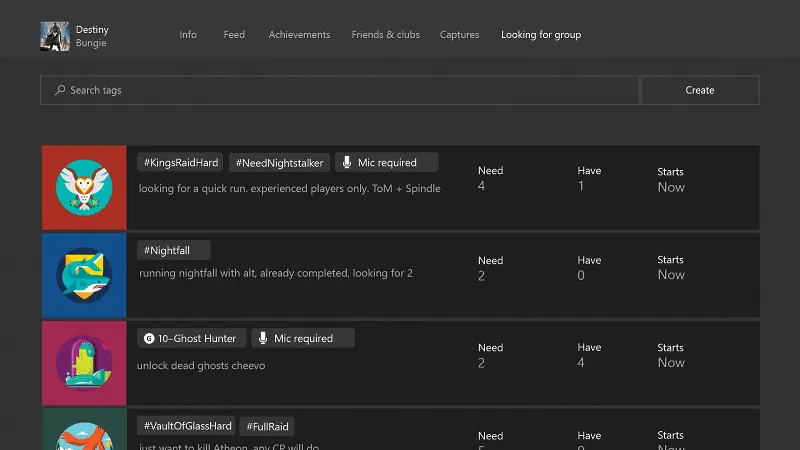

While self-moderation has certainly been key to helping get problem players out of games, Microsoft saw an opportunity to take it one step further. For those who just want to play or converse with like-minded individuals, Microsoft created the “clubs” feature (online meeting spaces) on Xbox One, where people with similar values, interests, and goals can come together. McCarthy says Microsoft has seen great success in this area. “We discovered the strong communities are not only ones where you provide kind of a safe space and a set of norms, but they’re also the ones where they get some degree of self-governance,” he explains.

Microsoft has also used clubs as a testing ground for new moderation features, which McCarthy says are in the works. A long-term goal for Xbox is to give you more choices and tools in how you play. “What I mean is put the dials and sliders ultimately in your hands so that you could decide, ‘Hey, I want to filter out stuff that is detected as harassing-type messages,’ or I’ll be silly, like, ‘I want to filter out the word ‘peanut butter’ and never see the word peanut butter again.’ You could customize down to whatever level you felt was appropriate as a user.”

Involving the community and putting moderation tools in their hands is a step in the right direction, and it’s encouraging to see more companies put forth ways for the community to help. After all, this is too big an issue to be tackled alone, and it will only grow in complexity as games continue to get bigger and turn more and more into social activities.

The industry doesn’t get better if it’s not constantly finding new solutions, and many companies are realizing that more needs to be done as our technology grows. “This needs to be a solved problem,” Priebe says. “Because games are [becoming] more and more voice-driven, especially as you need collaboration more than ever. People are realizing that if you have social games, that’s where your friends are.”

While game developers are still behind in this area, there is plenty of hope for the future. “What we are facing in gaming is more of a cultural shift over the last 10 years ... and it is on us to react more quickly than we have in the past to stay ahead of the curve,” says Rainbow Six Siege community developer Craig Robinson. “Right now, we are playing catch-up, and that’s not where we need to be in order to get toxicity under control. I expect for there to be a ton of improvements over the next 5-10 years across the industry, especially with the various publishers and developers sharing their learnings and insights through the Fair Play Alliance.”

And plenty of people tackling this issue have already been thinking ahead. Right now, we’ve depended largely on reactive measures to moderate people. The problem with that is it’s after the fact, as in the damage is already done. Many have an eye toward being more proactive, which means trying to anticipate problems before they happen, whether that’s designing to combat them or depending more on filters and A.I. “I think one of the biggest challenges is being stuck in the ways we’ve done things before,” Hart says. “We have the social needs increasing for players online; we need to think of our games differently. We need to be much more proactive. If we wait to have a game to be thinking about how people may interact with each other within that game, we’re already behind because then we have to retrofit systems onto an existing game as opposed to proactively designing to reduce disruption and to help produce those successful interactions. A short way of putting it is we need to move from punitive to proactive.”

What’s encouraging is that technology is only going to get better, and many feel optimistic that A.I. will be a great asset in moderation going forward. “A.I. is something that could really be a difference-maker with regards to how we’re able to moderate and how we’re able to enforce its scale across the community,” Tanielian says. Companies like Microsoft have already been investing in this area by trying to get as much data as possible to ensure the A.I. is accurate. “There’s actually goodness in those models getting trained more and more by more data,” McCarthy says. “As an example, we’ve done something called ‘photo DNA’ at Microsoft, where we tag certain images and we actually share that database with a large range of other companies. This is where I think collaboration is actually really important in the industry. Because if we can start to share some of these models and learning, then they get more sophisticated and accurate, and they actually can help a larger range of users overall. That’s just something we have to keep chipping away at: How do we utilize powerful technology like that in the right way? And to get it trained broadly across the industry to do the things we want it to do?”

These are big questions, but they’re the ones we can’t afford to leave unsolved, as we’re spending more and more time in these online spaces.

This article originally appeared in the November 2019 issue of Game Informer.
